

Features

🌍 Chinese: supports Mandarin and has been tested with multiple datasets: aidatatang_200zh, magicdata, aishell3

🤩 PyTorch: works with PyTorch, tested with version 1.9.0 (the latest in August 2021) on Tesla T4 and GTX 2060 GPUs

🌍 Windows + Linux: tested on both Windows and Linux after fixing nits

🤩 Easy & Awesome: good results with only a newly trained synthesizer, by reusing the pretrained encoder/vocoder

DEMO VIDEO

Quick Start

1. Install Requirements

Follow the original repo to check that your environment is ready. **Python 3.7 or higher** is needed to run the toolbox.

- Install PyTorch.

If you get an error like

ERROR: Could not find a version that satisfies the requirement torch==1.9.0+cu102 (from versions: 0.1.2, 0.1.2.post1, 0.1.2.post2)

it is probably caused by a Python version that is too low. Try Python 3.9, and the install should succeed.

- Install ffmpeg.

- Run

pip install -r requirements.txt

to install the remaining necessary packages.

- Install webrtcvad (if you need it):

pip install webrtcvad-wheels
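The install steps above can be sanity-checked with a short script. This is only a sketch, not part of the repository: the function name `check_environment` is made up for illustration, and it assumes that PyTorch is importable as `torch` and that the `webrtcvad-wheels` package installs a module named `webrtcvad`. It only reports what it finds and installs nothing.

```python
import importlib.util
import shutil
import sys

MIN_PYTHON = (3, 7)  # minimum version stated in this README


def check_environment(modules=("torch", "webrtcvad")):
    """Return a dict mapping each requirement to True (found) or False."""
    report = {
        # Compare only (major, minor) against the documented minimum.
        "python": sys.version_info[:2] >= MIN_PYTHON,
        # shutil.which returns None when the executable is not on PATH.
        "ffmpeg": shutil.which("ffmpeg") is not None,
    }
    for name in modules:
        # find_spec locates a top-level module without importing it.
        # The module names are assumptions, see the note above.
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for requirement, found in check_environment().items():
        print(f"{requirement}: {'ok' if found else 'MISSING'}")
```

A `MISSING` entry for `python` suggests switching to a newer interpreter before retrying the PyTorch install.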

Note that we use the pretrained encoder/vocoder but not the pretrained synthesizer, since the original model is incompatible with Chinese symbols. This means demo_cli does not work at the moment.

2. Train synthesizer with your dataset

- Download aidatatang_200zh or another dataset and unzip it; make sure you can access all the .wav files in the train folder

- Preprocess the audio and the mel spectrograms:

python synthesizer_preprocess_audio.py <datasets_root>

Pass the parameter --dataset {dataset} to select one of aidatatang_200zh, magicdata, aishell3

If you see the error "the page file is too small to complete the operation", please refer to this video and change the virtual memory to 100G (102400). For example, if the files are on the D drive, change the virtual memory of the D drive.

- Preprocess the embeddings:

python synthesizer_preprocess_embeds.py <datasets_root>/SV2TTS/synthesizer

- Train the synthesizer:

python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer
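The three commands above can be assembled from a single dataset root, which avoids retyping the SV2TTS/synthesizer path. The sketch below only builds the argument lists and counts .wav files; it runs nothing. The helper names `count_wavs` and `build_commands` are hypothetical and not part of the repository. The script names, the `--dataset` parameter, and the `mandarin` run name are taken from the steps above.

```python
from pathlib import Path

# Dataset names listed in this README for the --dataset parameter.
SUPPORTED_DATASETS = ("aidatatang_200zh", "magicdata", "aishell3")


def count_wavs(folder):
    """Count .wav files anywhere below the given folder."""
    return sum(1 for _ in Path(folder).rglob("*.wav"))


def build_commands(datasets_root, dataset="aidatatang_200zh", run_id="mandarin"):
    """Return the preprocessing and training commands as argument lists."""
    if dataset not in SUPPORTED_DATASETS:
        raise ValueError(f"unsupported dataset: {dataset!r}")
    root = Path(datasets_root)
    # Both later steps read from <datasets_root>/SV2TTS/synthesizer.
    syn_dir = str(root / "SV2TTS" / "synthesizer")
    return [
        ["python", "synthesizer_preprocess_audio.py", str(root), "--dataset", dataset],
        ["python", "synthesizer_preprocess_embeds.py", syn_dir],
        ["python", "synthesizer_train.py", run_id, syn_dir],
    ]


if __name__ == "__main__":
    # "datasets" is a placeholder path; replace it with your own root.
    for command in build_commands("datasets"):
        print(" ".join(command))
```

Checking `count_wavs(<datasets_root>)` before preprocessing is a quick way to confirm that the archive was unzipped where the scripts expect it. Each printed command can be pasted into a shell, or passed to `subprocess.run` once the paths look right.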

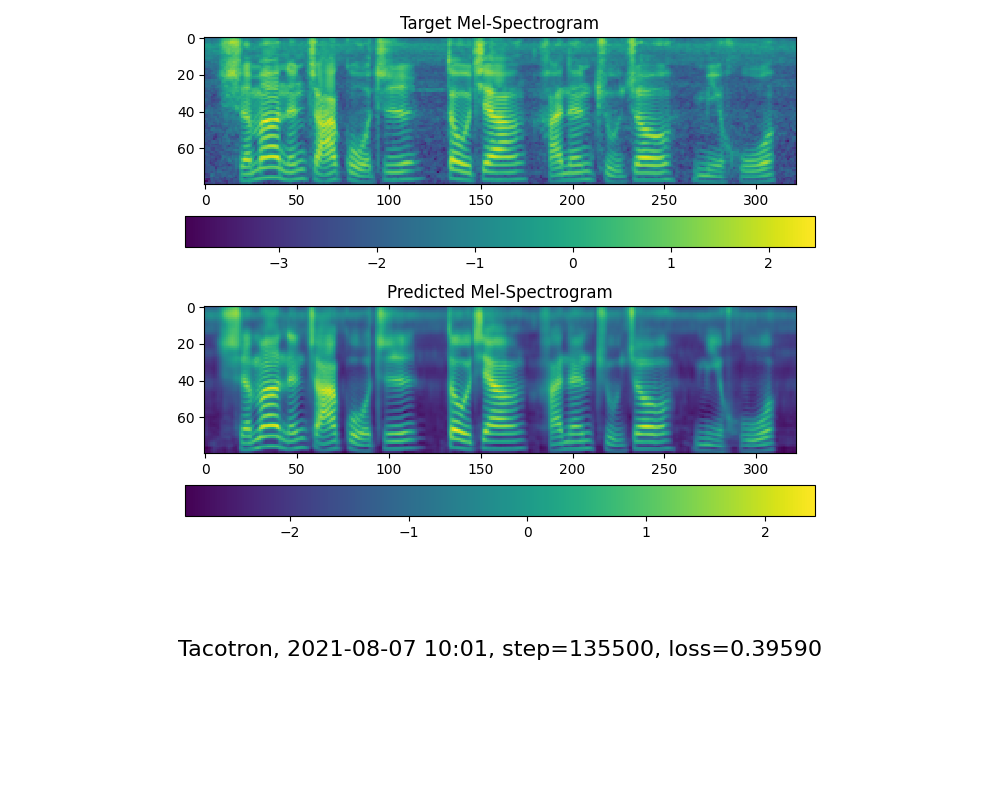

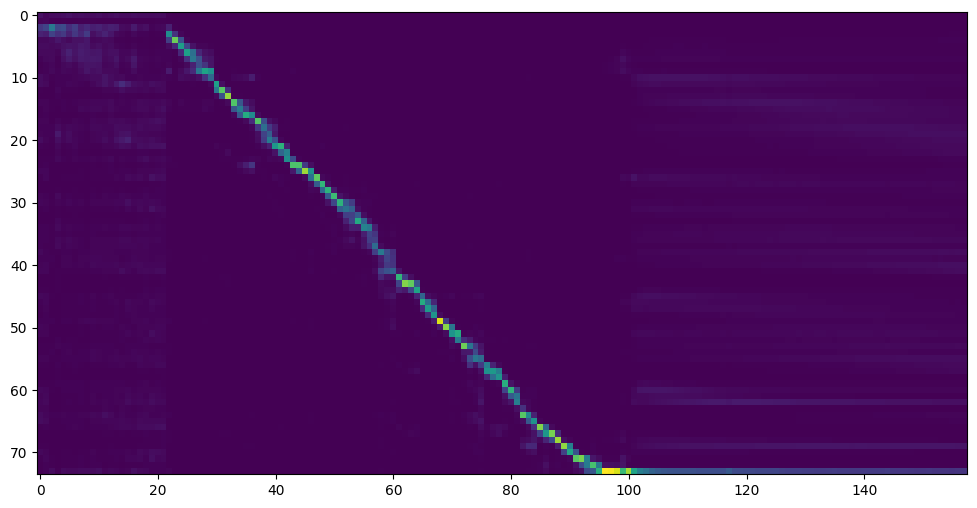

Go to the next step when, in the training folder synthesizer/saved_models/, you see the attention line appear and the loss meets your needs.

FYI, my attention line appeared after 18k steps and the loss dropped below 0.4 after 50k steps.
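The "loss meets your needs" check can be made a little less subjective by averaging recent values instead of reading a single noisy step. The sketch below assumes you have already collected per-step loss values into a plain Python list; it does not read the training logs, whose format is not described here. The 0.4 threshold echoes the figure quoted above, and the window of 100 steps is an arbitrary illustrative choice. The attention plot still has to be inspected by eye.

```python
def mean_recent_loss(losses, window=100):
    """Average of the last `window` loss values."""
    recent = losses[-window:]
    if not recent:
        raise ValueError("no loss values recorded yet")
    return sum(recent) / len(recent)


def loss_is_low_enough(losses, threshold=0.4, window=100):
    """True once a full window of losses averages below the threshold."""
    # Require a full window so a few lucky early steps do not count.
    return len(losses) >= window and mean_recent_loss(losses, window) < threshold


if __name__ == "__main__":
    # Made-up numbers purely for illustration, not real training results.
    example = [0.9] * 50 + [0.35] * 100
    print(loss_is_low_enough(example))
```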

2.2 Use pretrained model of synthesizer

Thanks to the community, some models will be shared:

| Author | Download link | Preview video |
|---|---|---|
| @miven | https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ code:2021 | https://www.bilibili.com/video/BV1uh411B7AD/ |

A link to my early trained model: Baidu Yun Code:aid4

3. Launch the Toolbox

You can then try the toolbox:

python demo_toolbox.py -d <datasets_root>

or

python demo_toolbox.py

Good news🤩: Chinese Characters are supported
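The two launch variants differ only in the optional `-d <datasets_root>` argument. As a small sketch, the hypothetical helper below (not part of the repository) builds either form. It uses `sys.executable` so the toolbox would start with the same interpreter that has the requirements installed.

```python
import sys


def toolbox_command(datasets_root=None):
    """Build the argument list for launching demo_toolbox.py."""
    command = [sys.executable, "demo_toolbox.py"]
    if datasets_root is not None:
        # -d points the toolbox at the datasets root, as in the first variant.
        command += ["-d", str(datasets_root)]
    return command


if __name__ == "__main__":
    # Printing only; pass the list to subprocess.run(...) to actually launch.
    print(toolbox_command())
    print(toolbox_command("datasets"))  # "datasets" is a placeholder path
```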

TODO

- Add demo video

- Add support for more datasets

- Upload pretrained model

- Support parallel tacotron

- Service-oriented and dockerize

- 🙏 Welcome to add more

Reference

This repository is forked from Real-Time-Voice-Cloning, which only supports English.

| URL | Designation | Title | Implementation source |
|---|---|---|---|
| 1806.04558 | SV2TTS | Transfer Learning from Speaker Verification to Multispeaker Text-To-Speech Synthesis | This repo |
| 1802.08435 | WaveRNN (vocoder) | Efficient Neural Audio Synthesis | fatchord/WaveRNN |
| 1703.10135 | Tacotron (synthesizer) | Tacotron: Towards End-to-End Speech Synthesis | fatchord/WaveRNN |
| 1710.10467 | GE2E (encoder) | Generalized End-To-End Loss for Speaker Verification | This repo |