Mirror of https://github.com/babysor/MockingBird.git, synced 2024-03-22 13:11:31 +08:00, commit 9f30ca8e92


You can pass the parameter `--dataset {dataset}`; supported values are aidatatang_200zh and magicdata.


> If the `aidatatang_200zh` dataset you downloaded is on drive D and the `train` folder path is `D:\data\aidatatang_200zh\corpus\train`, then your `datasets_root` is `D:\data\`
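
The rule above amounts to walking three levels up from the `train` folder. A minimal sketch, assuming the `aidatatang_200zh/corpus/train` layout from the example; `datasets_root_from_train` is a hypothetical helper, not part of MockingBird. It uses `PureWindowsPath` to match the Windows example; on Linux, `PurePosixPath` works the same way.

```python
from pathlib import PureWindowsPath


def datasets_root_from_train(train_path: str) -> PureWindowsPath:
    """Walk up from .../aidatatang_200zh/corpus/train to the datasets_root folder."""
    p = PureWindowsPath(train_path)
    # The last three components must match the layout in the example above
    if [part.lower() for part in p.parts[-3:]] != ["aidatatang_200zh", "corpus", "train"]:
        raise ValueError(f"unexpected dataset layout: {train_path}")
    # parents[0] ends in corpus, parents[1] in aidatatang_200zh, parents[2] is datasets_root
    return p.parents[2]


print(datasets_root_from_train(r"D:\data\aidatatang_200zh\corpus\train"))  # D:\data
```

pathlib drops the trailing backslash, so the printed `D:\data` is the same folder as `D:\data\`.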


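> If you get `The paging file is too small for this operation to complete`, refer to this [article](https://blog.csdn.net/qq_17755303/article/details/112564030) and increase the virtual memory to 100G (102400 MB). For example, if the files are on drive D, change the virtual memory of drive D.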


* Preprocess the embeddings:


`python synthesizer_preprocess_embeds.py <datasets_root>/SV2TTS/synthesizer`


### 2.2 Use a pretrained synthesizer


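> If you lack the hardware or don't want to spend time tuning, you can use models contributed by the community (further sharing is welcome):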


| Author | Download link | Preview video |
| --- | ----------- | ----- |
| @miven | https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ extraction code: 2021 | https://www.bilibili.com/video/BV1uh411B7AD/ |


### 3. Launch the Toolbox


README.md


[MIT License](http://choosealicense.com/licenses/mit/)


> This repository is forked from [Real-Time-Voice-Cloning](https://github.com/CorentinJ/Real-Time-Voice-Cloning), which only supports English.


> English | [中文](README-CN.md)


## Features


🌍 **Chinese** Mandarin is supported and tested with multiple datasets: aidatatang_200zh, magicdata


🤩 **PyTorch** works with PyTorch; tested with version 1.9.0 (the latest as of August 2021) on Tesla T4 and GTX 2060 GPUs


🌍 **Windows + Linux** tested on both Windows and Linux after fixing minor issues


🤩 **Easy & Awesome** good results with only a newly trained synthesizer, reusing the pretrained encoder/vocoder


> If you get `ERROR: Could not find a version that satisfies the requirement torch==1.9.0+cu102 (from versions: 0.1.2, 0.1.2.post1, 0.1.2.post2 )`, your Python version is probably too old; try Python 3.9 and it should install successfully.
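
A quick way to see which interpreter you are on before installing. A minimal sketch; `matches_recommended` is a hypothetical helper, and it only compares against the 3.9 suggested in the note above, it does not verify torch compatibility.

```python
import sys

RECOMMENDED = (3, 9)  # the version suggested in the note above


def matches_recommended(version_info=sys.version_info) -> bool:
    """True if the interpreter's major.minor equals the suggested version."""
    return tuple(version_info[:2]) == RECOMMENDED


if not matches_recommended():
    print(f"Python {sys.version_info.major}.{sys.version_info.minor} detected; "
          "if torch==1.9.0+cu102 fails to install, try Python 3.9.")
```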


* Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).


* Run `pip install -r requirements.txt` to install the remaining necessary packages.


* Install webrtcvad with `pip install webrtcvad-wheels` (if needed).
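
Once these are installed, you can check that ffmpeg is on your `PATH` and that webrtcvad can be imported. A minimal sketch; `check_prerequisites` is a hypothetical helper, and it assumes `webrtcvad-wheels` provides the `webrtcvad` module name.

```python
import importlib.util
import shutil


def check_prerequisites() -> dict:
    """Map each prerequisite name to whether it was found."""
    return {
        # ffmpeg is an executable, so look it up on PATH
        "ffmpeg": shutil.which("ffmpeg") is not None,
        # find_spec locates the top-level module without importing it
        "webrtcvad": importlib.util.find_spec("webrtcvad") is not None,
    }


missing = [name for name, found in check_prerequisites().items() if not found]
print("missing:", ", ".join(missing) if missing else "none")
```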


> Note that we reuse the pretrained encoder/vocoder but not the synthesizer, since the original synthesizer model is incompatible with Chinese symbols. This means `demo_cli` does not work at the moment.


### 2. Train synthesizer with your dataset


* Download the aidatatang_200zh or SLR68 dataset and unzip it; make sure you can access all .wav files in the *train* folder


* Preprocess the audio and the mel spectrograms:


`python synthesizer_preprocess_audio.py <datasets_root>`


The `--dataset {dataset}` parameter selects the dataset; supported values are aidatatang_200zh and magicdata.
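
Before running the preprocessing command, a quick pre-flight check can confirm that the `.wav` files are reachable and that the `--dataset` value is one of the two dataset names mentioned above (aidatatang_200zh, magicdata). A minimal sketch; `count_wavs` and `preprocess_audio_cmd` are hypothetical helpers, not part of MockingBird, and treating those two names as the only accepted values is an assumption about the script.

```python
from __future__ import annotations

import sys
from pathlib import Path

# Dataset names mentioned in this README (assumed to be the accepted --dataset values)
SUPPORTED_DATASETS = ("aidatatang_200zh", "magicdata")


def count_wavs(folder: str | Path) -> int:
    """Count .wav files under folder, including subfolders (case-insensitive extension)."""
    root = Path(folder)
    if not root.is_dir():
        raise FileNotFoundError(f"folder not found: {root}")
    return sum(1 for p in root.rglob("*") if p.is_file() and p.suffix.lower() == ".wav")


def preprocess_audio_cmd(datasets_root: str, dataset: str) -> list[str]:
    """Build the preprocessing command, rejecting dataset names not listed above."""
    if dataset not in SUPPORTED_DATASETS:
        raise ValueError(f"unsupported dataset {dataset!r}; expected one of {SUPPORTED_DATASETS}")
    return [sys.executable, "synthesizer_preprocess_audio.py", datasets_root, "--dataset", dataset]
```

For example, `count_wavs(Path(root, "aidatatang_200zh", "corpus", "train"))` should be non-zero before you pass `preprocess_audio_cmd(root, "aidatatang_200zh")` to `subprocess.run(..., check=True)` from the repository root.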


> If you get `the page file is too small to complete the operation`, refer to this [video](https://www.youtube.com/watch?v=Oh6dga-Oy10&ab_channel=CodeProf) and increase the virtual memory to 100G (102400 MB). For example, if the files are on drive D, change the virtual memory of drive D.


* Preprocess the embeddings:


`python synthesizer_preprocess_embeds.py <datasets_root>/SV2TTS/synthesizer`


* Train the synthesizer:


`python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`
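
The three commands in this section run in order, and the last two both read from `<datasets_root>/SV2TTS/synthesizer`. A sketch that chains them, assuming it is run from the repository root; `training_steps` and `run_all` are hypothetical names, and reading `mandarin` as a name for the model run is an assumption.

```python
import subprocess
import sys
from pathlib import Path
from typing import List


def training_steps(datasets_root: str, run_name: str = "mandarin") -> List[List[str]]:
    """Return the three README commands in order, as argument lists."""
    synth_dir = str(Path(datasets_root) / "SV2TTS" / "synthesizer")
    return [
        [sys.executable, "synthesizer_preprocess_audio.py", datasets_root],
        [sys.executable, "synthesizer_preprocess_embeds.py", synth_dir],
        [sys.executable, "synthesizer_train.py", run_name, synth_dir],
    ]


def run_all(datasets_root: str) -> None:
    for cmd in training_steps(datasets_root):
        # check=True raises CalledProcessError, stopping at the first failing step
        subprocess.run(cmd, check=True)
```

Passing argument lists instead of a single shell string avoids quoting problems with Windows paths such as `D:\data\`.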


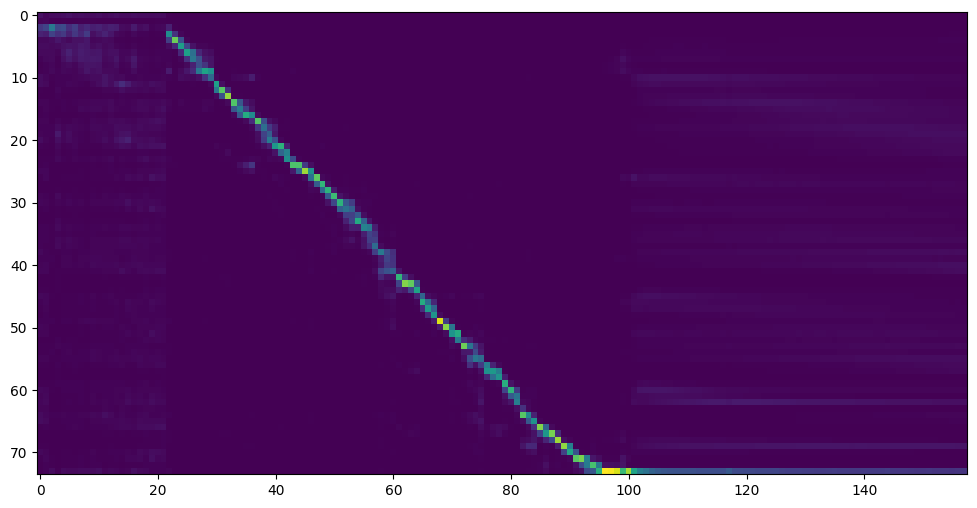

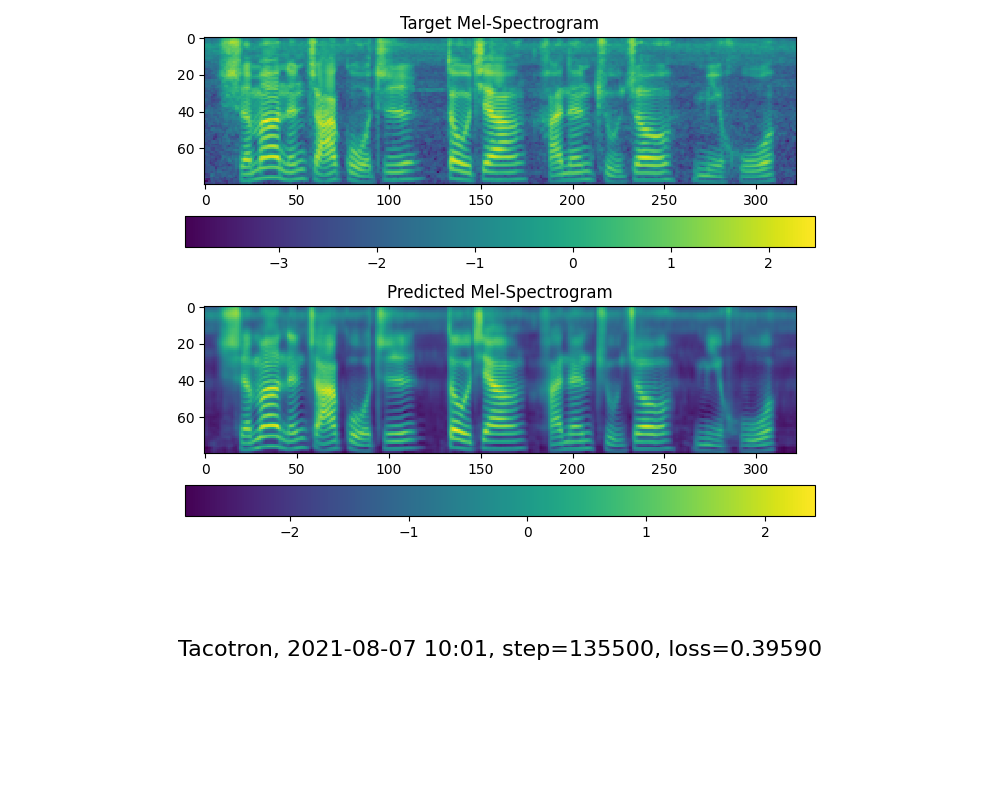

* Move on to the next step once the attention line appears and the loss meets your needs; check the training folder *synthesizer/saved_models/*.


> FYI, my attention appeared after 18k steps, and the loss dropped below 0.4 after 50k steps.
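
To apply the "loss meets your needs" criterion, you can scan your recorded losses for the first step below a chosen threshold. A sketch over a hypothetical list of `(step, loss)` pairs; how you collect them depends on your own logging, and 0.4 is the author's observation, not a required target. The attention line still has to be checked visually in the plots.

```python
from typing import Iterable, Optional, Tuple


def first_step_below(history: Iterable[Tuple[int, float]], threshold: float = 0.4) -> Optional[int]:
    """Return the first training step whose loss is below threshold, or None."""
    for step, loss in history:
        if loss < threshold:
            return step
    return None


# Hypothetical (step, loss) pairs; real values come from your own training output
history = [(18_000, 0.62), (35_000, 0.47), (50_000, 0.39), (60_000, 0.36)]
print(first_step_below(history))  # 50000
```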


### 2.2 Use a pretrained synthesizer model


> Thanks to the community, some models are shared here:


| Author | Download link | Preview video |
| --- | ----------- | ----- |
| @miven | https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ code: 2021 | https://www.bilibili.com/video/BV1uh411B7AD/ |


> A link to my early trained model: [Baidu Yun](https://pan.baidu.com/s/10t3XycWiNIg5dN5E_bMORQ), code: aid4

### 3. Launch the Toolbox


You can then try the toolbox:


`python demo_toolbox.py -d <datasets_root>`


or


`python demo_toolbox.py`


> Good news 🤩: Chinese characters are supported.
