Mirror of https://github.com/babysor/MockingBird.git

Synced 2024-03-22 13:11:31 +08:00

Built-in pretrained encoder/vocoder model (simplify the setup process, pre-integrate the models)

Parent: b73dc6885c
Commit: 57b06a29ec

.gitignore (vendored, 4 lines changed)

@@ -15,6 +15,4 @@
 *.toc
 *.wav
 *.sh
-encoder/saved_models/*
-synthesizer/saved_models/*
-vocoder/saved_models/*
+synthesizer/saved_models/*
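This hunk stops ignoring `encoder/saved_models/*` and `vocoder/saved_models/*`, which is what lets the pretrained checkpoints be committed. Here is a rough Python sketch of the effect. It approximates gitignore rules with `fnmatch`: a path counts as ignored when the path itself or one of its parent directories matches a pattern. The helper and the `synthesizer/saved_models/example.pt` path are hypothetical, and negation, `**` and anchoring rules are not modelled. `git check-ignore -v <path>` reports git's actual answer.

```python
from fnmatch import fnmatchcase

# Ignore patterns from the hunk above, before and after this commit.
OLD_PATTERNS = ["*.toc", "*.wav", "*.sh",
                "encoder/saved_models/*",
                "synthesizer/saved_models/*",
                "vocoder/saved_models/*"]
NEW_PATTERNS = ["*.toc", "*.wav", "*.sh",
                "synthesizer/saved_models/*"]


def is_ignored(path: str, patterns: list[str]) -> bool:
    """Rough approximation of gitignore matching (hypothetical helper).

    A path is treated as ignored if the path itself or any parent
    directory matches one of the patterns. Negation ("!"), "**" and
    trailing-slash rules are not handled.
    """
    parts = path.split("/")
    for i in range(1, len(parts) + 1):
        prefix = "/".join(parts[:i])
        if any(fnmatchcase(prefix, p) for p in patterns):
            return True
    return False


# Hypothetical synthesizer checkpoint name, used only for comparison.
for model in ["encoder/saved_models/pretrained.pt",
              "vocoder/saved_models/pretrained/pretrained.pt",
              "synthesizer/saved_models/example.pt"]:
    print(model, "old:", is_ignored(model, OLD_PATTERNS),
          "new:", is_ignored(model, NEW_PATTERNS))
```

Under these approximations, both built-in checkpoints switch from ignored to tracked, while anything under `synthesizer/saved_models/` stays ignored.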

README-CN.md (20 lines changed)

@@ -25,16 +25,7 @@
 * Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).
 * Run `pip install -r requirements.txt` to install the remaining required packages.
 
-### 2. Use the pretrained encoder/vocoder
-Download the [pretrained models](https://github.com/CorentinJ/Real-Time-Voice-Cloning/wiki/Pretrained-models), extract the archive, and copy the `saved_models` folders under `encoder` and `vocoder` into the corresponding directories of this repository
-
-Make sure you have the following files:
-```
-encoder\saved_models\pretrained.pt
-vocoder\saved_models\pretrained\pretrained.pt
-```
-
-### 3. Train the synthesizer with a dataset
+### 2. Train the synthesizer with a dataset
 * Download the dataset and extract it: make sure you can access all audio files (e.g. .wav) in the *train* folder
 * Preprocess the audio and mel spectrograms:
 `python synthesizer_preprocess_audio.py <datasets_root>`
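The dataset step only requires that every audio file in the *train* folder be reachable. Here is a minimal sketch of that check. It assumes the dataset is extracted under `<datasets_root>` and that its `.wav` files sit below one or more directories literally named `train`. The helper name and the layout are assumptions, not part of the project.

```python
from pathlib import Path


def find_train_wavs(datasets_root: str) -> list[Path]:
    """Return every .wav file found below a directory named "train".

    Assumption: the dataset was extracted under `datasets_root` and keeps
    its audio in (possibly nested) folders called "train".
    """
    root = Path(datasets_root)
    found: set[Path] = set()
    for train_dir in root.rglob("train"):
        if train_dir.is_dir():
            # rglob("*.wav") also descends into per-speaker subfolders;
            # the set avoids duplicates if "train" folders are nested.
            found.update(train_dir.rglob("*.wav"))
    return sorted(found)
```

For example, `len(find_train_wavs("/path/to/datasets_root"))` gives a quick count before you run the preprocessing script. Note that the pattern match is case-sensitive on most Linux filesystems.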

@ -50,14 +41,15 @@ vocoder\saved_models\pretrained\pretrained.pt

|

|||

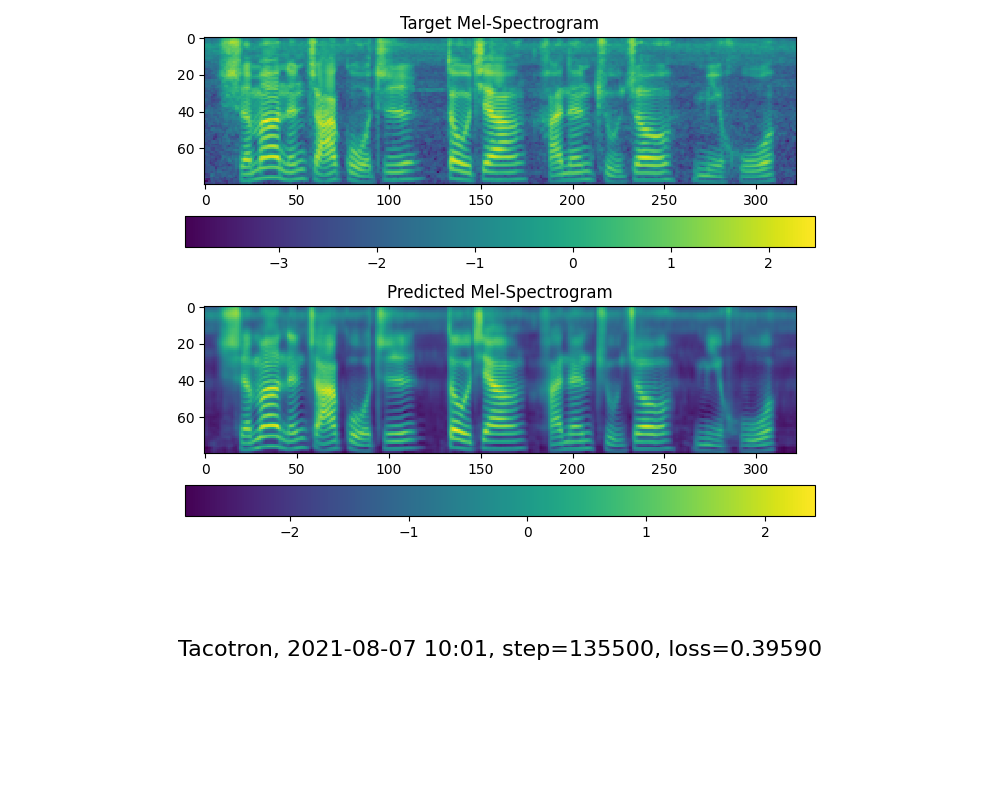

* 当您在训练文件夹 *synthesizer/saved_models/* 中看到注意线显示和损失满足您的需要时,请转到下一步。

|

||||

> 仅供参考,我的注意力是在 18k 步之后出现的,并且在 50k 步之后损失变得低于 0.4。

|

||||

|

||||

|

||||

### 4. 启动工具箱

|

||||

### 3. 启动工具箱

|

||||

然后您可以尝试使用工具箱:

|

||||

`python demo_toolbox.py -d <datasets_root>`

|

||||

|

||||

## TODO

|

||||

- [ ] 允许直接使用中文

|

||||

- [X] 允许直接使用中文

|

||||

- [X] 添加演示视频

|

||||

- [X] 添加对更多数据集的支持

|

||||

- [ ] 上传预训练模型

|

||||

- [X] 上传预训练模型

|

||||

- [ ] 支持parallel tacotron

|

||||

- [ ] 服务化与容器化

|

||||

- [ ] 🙏 欢迎补充

|

||||

|

|

|

|||
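The criterion for moving on is informal ("the loss meets your needs"), and the author's figures are only a reference point. If you log `(step, loss)` pairs from your own run, a single pass over them finds where the loss first drops below a chosen threshold. The helper name, the log format and the sample values below are hypothetical.

```python
def first_step_below(history, threshold=0.4):
    """Return the first training step whose loss is below `threshold`.

    `history` is a hypothetical list of (step, loss) pairs, e.g. parsed
    from your own training log. Returns None if the threshold is never
    reached.
    """
    for step, loss in sorted(history):  # scan in step order
        if loss < threshold:
            return step
    return None


# Made-up values for illustration only.
print(first_step_below([(1000, 0.9), (2000, 0.5), (3000, 0.35)]))  # 3000
```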

README.md (12 lines changed)

@@ -26,12 +26,8 @@
 * Install [PyTorch](https://pytorch.org/get-started/locally/).
 * Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).
 * Run `pip install -r requirements.txt` to install the remaining necessary packages.
-
-### 2. Reuse the pretrained encoder/vocoder
-* Download the following models and extract the encoder and vocoder models to the corresponding directories of this project. Don't use the synthesizer.
-https://github.com/CorentinJ/Real-Time-Voice-Cloning/wiki/Pretrained-models
-> Note that we need to specify the newly trained synthesizer model, since the original model is incompatible with Chinese symbols. This means demo_cli does not work at the moment.
-### 3. Train synthesizer with your dataset
+> Note that we use the pretrained encoder/vocoder but not the synthesizer, since the original model is incompatible with Chinese symbols. This means demo_cli does not work at the moment.
+### 2. Train synthesizer with your dataset
 * Download the aidatatang_200zh or SLR68 dataset and unzip it: make sure you can access all .wav files in the *train* folder
 * Preprocess the audio and the mel spectrograms:
 `python synthesizer_preprocess_audio.py <datasets_root>`
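The next hunk header quotes the README's note that a `--dataset {dataset}` parameter covers aidatatang_200zh and SLR68. Here is a small sketch that builds the preprocessing command as an argument list for `subprocess`. The helper names are hypothetical. The exact value the script expects for SLR68 is not given here, so the dataset name is passed through unchecked.

```python
import shlex
import subprocess
import sys


def preprocess_command(datasets_root, dataset=None):
    """Build the preprocessing command line (hypothetical helper).

    Mirrors `python synthesizer_preprocess_audio.py <datasets_root>` and
    appends `--dataset <name>` when a dataset name is given.
    """
    # sys.executable runs the script with the current Python interpreter.
    cmd = [sys.executable, "synthesizer_preprocess_audio.py", str(datasets_root)]
    if dataset is not None:
        cmd += ["--dataset", dataset]
    return cmd


def run_preprocess(datasets_root, dataset=None):
    """Run the command from the repository root; raises on a non-zero exit."""
    subprocess.run(preprocess_command(datasets_root, dataset), check=True)


# Show the command that would run, quoted for a POSIX shell.
print(shlex.join(preprocess_command("<datasets_root>", "aidatatang_200zh")))
```

An argument list, unlike a shell string, avoids quoting problems when `<datasets_root>` contains spaces.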

@@ -48,7 +44,7 @@ Allow parameter `--dataset {dataset}` to support aidatatang_200zh, SLR68
 
 > A link to my early trained model: [Baidu Yun](https://pan.baidu.com/s/10t3XycWiNIg5dN5E_bMORQ)
 Code: aid4
-### 4. Launch the Toolbox
+### 3. Launch the Toolbox
 You can then try the toolbox:
 
 `python demo_toolbox.py -d <datasets_root>`

@@ -59,4 +55,6 @@ or
 - [x] Add demo video
 - [X] Add support for more datasets
 - [X] Upload pretrained model
+- [ ] Support parallel tacotron
+- [ ] Service-oriented and dockerized
 - 🙏 Additions welcome

BIN encoder/saved_models/pretrained.pt
Normal file
Binary file not shown.

BIN vocoder/saved_models/pretrained/pretrained.pt
Normal file
Binary file not shown.
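The two checkpoints are now committed as binary files, so the manual download-and-copy step is no longer needed. Here is a minimal sanity check, run from the repository root, that a checkout actually contains them. The helper name is hypothetical; the paths are the ones added above.

```python
from pathlib import Path

# Checkpoints added to the repository by this commit.
BUILTIN_MODELS = [
    Path("encoder/saved_models/pretrained.pt"),
    Path("vocoder/saved_models/pretrained/pretrained.pt"),
]


def missing_models(repo_root="."):
    """Return the built-in checkpoints that are absent under repo_root."""
    root = Path(repo_root)
    return [p.as_posix() for p in BUILTIN_MODELS if not (root / p).is_file()]


missing = missing_models()
print("all built-in models present" if not missing else f"missing: {missing}")
```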
